What is STRESS Testing in Software Testing? Tools, Types, Examples

Stress testing is a software testing approach that assesses the stability and reliability of an application. It examines the software’s robustness and its ability to handle errors when subjected to exceptionally heavy loads. The primary objective is to ensure that the software remains stable and does not crash even in demanding situations. Stress testing goes beyond normal operating conditions, pushing the software to its limits and evaluating its behavior under extreme scenarios. Stress Testing is sometimes loosely called Endurance Testing, although endurance (soak) testing more precisely refers to applying a sustained load over a long period and is better treated as one type of stress testing.

During Stress Testing, the Application Under Test (AUT) is deliberately subjected to a brief period of intense load to gauge its resilience. This testing technique is particularly valuable for determining the threshold at which the system, software, or hardware may fail. Additionally, Stress Testing examines how effectively the system manages errors under these extreme conditions.

As an example, consider copying a substantial amount of data (e.g., 5GB) from a website and pasting it into Notepad. Under this load, Notepad stops responding and shows a ‘Not Responding’ status, indicating that it cannot handle the imposed load. A scenario like this shows how an application behaves under extreme conditions and how well it manages errors.

Need for Stress Testing:

The need for stress testing in software development arises from several critical considerations, and it plays a crucial role in ensuring the robustness and reliability of a software application:

- Assessing System Stability:

Stress testing helps evaluate the stability of a system under extreme conditions. It identifies potential points of failure and ensures that the system remains stable and responsive even when subjected to heavy loads.

- Identifying Performance Limits:

By pushing the system beyond its normal operational limits, stress testing helps identify the maximum capacity at which the software, hardware, or network infrastructure can function. This information is valuable for capacity planning and scalability analysis.

- Verifying Error Handling:

Stress testing assesses how well the software handles errors and exceptions under extreme loads. It helps identify and rectify issues related to error messages, system crashes, or unexpected behavior, ensuring a more robust application.

- Detecting Memory Leaks:

Intensive stress testing can reveal memory leaks and resource-related issues. Identifying and addressing these concerns is crucial to prevent performance degradation over time and enhance the overall reliability of the application.

- Ensuring Availability Under Pressure:

Stress testing simulates scenarios where the system experiences a sudden surge in user activity, ensuring that the application remains available and responsive even during peak usage periods.

- Meeting User Expectations:

Users expect software applications to perform reliably under varying conditions. Stress testing helps ensure that the application meets or exceeds these expectations, providing a positive user experience even when the system is under stress.

- Preventing Downtime and Failures:

By uncovering performance bottlenecks and weak points in the system, stress testing helps prevent unexpected downtime and failures in a production environment. This proactive approach minimizes the risk of disruptions and associated business impacts.

- Enhancing System Resilience:

Stress testing contributes to building a more resilient system by exposing it to challenging conditions. Applications that can withstand stress are better equipped to handle unexpected spikes in traffic or usage.

- Meeting Quality Assurance Standards:

Stress testing is a crucial aspect of quality assurance, ensuring that software applications adhere to performance standards and comply with industry best practices. It enhances the overall quality and reliability of the software.

- Gaining Confidence in Deployments:

By conducting thorough stress testing before deployment, development teams and stakeholders gain confidence in the system’s ability to handle real-world scenarios. This confidence is essential for successful software rollouts.

- Improving Customer Satisfaction:

When software performs well under stress, it contributes to a positive user experience. This, in turn, improves customer satisfaction, fosters trust in the application, and enhances the reputation of the software.

- Supporting Business Continuity:

Stress testing is instrumental in ensuring business continuity by minimizing the likelihood of unexpected system failures or disruptions. This is particularly important for mission-critical applications.

Goals of Stress Testing:

The goals of stress testing in software development are focused on evaluating how a system performs under extreme conditions and identifying its breaking points.

- Assessing System Stability:

Evaluate the stability of the system under heavy loads, ensuring that it can handle intense stress without crashing or becoming unresponsive.

- Determining Maximum Capacity:

Identify the maximum capacity of the system in terms of users, transactions, or data volume. Understand the point at which the system starts to exhibit performance degradation.

- Verifying Scalability:

Assess how well the system scales in response to increasing loads. Determine whether the application can handle a growing number of users or transactions while maintaining acceptable performance.

- Evaluating Error Handling:

Test the system’s error handling capabilities under stressful conditions. Verify that the application effectively manages errors, provides appropriate error messages, and gracefully recovers from unexpected situations.

- Detecting Performance Bottlenecks:

Identify performance bottlenecks, such as slow response times or resource limitations, that may impact the overall performance of the system under stress.

- Testing Beyond Normal Operating Points:

Push the system beyond normal operating conditions to evaluate its behavior under extreme scenarios. This includes testing with higher-than-expected user loads, data volumes, or transaction rates.

- Assessing Recovery Capabilities:

Evaluate how well the system recovers from stress-induced failures. Measure the recovery time and effectiveness of the system in returning to a stable state after encountering extreme conditions.

- Validating Resource Utilization:

Examine the utilization of system resources, such as CPU, memory, and network bandwidth, under stress. Ensure that the application optimally uses resources without leading to resource exhaustion.

- Preventing Memory Leaks:

Identify and address potential memory leaks or resource-related issues that may occur when the system is subjected to prolonged stress. Ensure that the application maintains performance over extended periods.

- Ensuring Availability Under Peak Load:

Verify that the application remains available and responsive even during peak loads or unexpected spikes in user activity. Assess the system’s ability to handle high traffic without compromising performance.

- Meeting Service Level Agreements (SLAs):

Ensure that the system’s performance aligns with the defined Service Level Agreements (SLAs). Validate that response times and availability meet the specified criteria under stress.

- Enhancing Reliability and Robustness:

Strengthen the overall reliability and robustness of the system by exposing it to challenging conditions. Identify and address weaknesses to build a more resilient application.

- Supporting Business Continuity:

Contribute to business continuity by minimizing the risk of unexpected system failures or disruptions. Ensure that the application remains stable even when subjected to stress.

- Improving User Experience:

Enhance the user experience by ensuring that the application maintains acceptable performance and responsiveness, even when facing high levels of stress.
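The memory-leak goal above can be checked in a rough way by comparing traced memory before and after many repeated calls. The following is a minimal sketch using Python's standard `tracemalloc` module; `leaky_handler` and `clean_handler` are hypothetical stand-ins for application code, not part of any real system, and a real soak test would run far longer against the actual application.

```python
import tracemalloc

_retained = []  # hypothetical global that is never cleared (the "leak")


def leaky_handler() -> None:
    """Hypothetical request handler that keeps every buffer alive."""
    _retained.append(bytearray(1024))


def clean_handler() -> None:
    """Hypothetical request handler that releases its buffer on return."""
    bytearray(1024)


def memory_growth(handler, iterations: int) -> int:
    """Return the change in traced memory (bytes) after repeated calls."""
    tracemalloc.start()
    try:
        handler()  # warm-up call so one-time allocations are not counted
        before, _peak = tracemalloc.get_traced_memory()
        for _ in range(iterations):
            handler()
        after, _peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return after - before


if __name__ == "__main__":
    print("leaky growth (bytes):", memory_growth(leaky_handler, 2000))
    print("clean growth (bytes):", memory_growth(clean_handler, 2000))
```

Growth that keeps rising with the number of iterations suggests retained memory, while a flat figure suggests the handler releases what it allocates.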

Load Testing Vs. Stress Testing:

| Aspect | Load Testing | Stress Testing |
| --- | --- | --- |
| Objective | Evaluate the system’s behavior under expected loads. | Assess the system’s stability and performance under extreme conditions beyond its capacity. |
| Purpose | Ensure the application can handle typical user loads. | Identify breaking points, bottlenecks, and weaknesses under stress, pushing the system to its limits. |
| Load Levels | Gradually increase user load to simulate normal conditions. | Apply an intense and excessive load to determine the system’s breaking point. |
| Duration | Conducted for an extended period under normal conditions. | Applied for a short duration with an intense and peak load. |
| Scope | Tests within expected operational parameters. | Tests beyond normal operating points to assess the system’s robustness. |
| User Behavior | Simulates typical user behavior and usage patterns. | Simulates extreme scenarios, often with higher loads than expected in real-world use. |
| Goal | Optimize performance, identify bottlenecks, and ensure reliability under typical usage. | Identify system limitations, assess error handling under stress, and evaluate system recovery. |
| Outcome Analysis | Focuses on response times, throughput, and resource utilization under normal conditions. | Examines how the system behaves at or beyond its limits, assessing failure points and recovery capabilities. |
| Failure Point | Typically, the failure point is not the main focus. | Identifying the system’s breaking point and understanding its failure characteristics is a primary objective. |
| Scalability | Assesses the system’s scalability and ability to handle a growing number of users. | Tests the system’s scalability but focuses on determining its breaking point and how it handles stress. |
| Examples | Testing an e-commerce website under expected user traffic. | Simulating a sudden surge in user activity to observe how the system copes under extreme loads. |

Types of Stress Testing:

Stress testing comes in various forms, each targeting specific aspects of a system’s performance under extreme conditions. Here are different types of stress testing:

- Peak Load Testing:

- Objective: Evaluate how the system performs under the highest expected load.

- Scenario: Simulate peak usage conditions to identify any performance bottlenecks and assess the system’s response to heavy traffic.

- Volume Testing:

- Objective: Assess the system’s ability to handle a large volume of data.

- Scenario: Populate the database with a significant amount of data to measure how the system manages and retrieves information under stress.

- Soak Testing (Endurance Testing):

- Objective: Evaluate system stability over an extended period under a consistent load.

- Scenario: Apply a sustained load for an extended duration to uncover issues related to memory leaks, resource exhaustion, or degradation over time.

- Scalability Testing:

- Objective: Assess how well the system scales with increased load.

- Scenario: Gradually increase the user load to evaluate the system’s capacity to handle growing numbers of users, transactions, or data.

- Spike Testing:

- Objective: Evaluate the system’s response to sudden, extreme increases in load.

- Scenario: Simulate rapid spikes in user activity to identify how well the system handles abrupt surges in traffic.

- Adaptive Testing:

- Objective: Dynamically adjust the load during testing to assess the system’s ability to adapt.

- Scenario: Vary the user load in real-time to mimic unpredictable fluctuations in demand and observe how the system adjusts.

- Negative Stress Testing:

- Objective: Evaluate the system’s behavior when subjected to loads beyond its specified limits.

- Scenario: Apply excessive loads or perform actions that exceed the system’s capacity to understand failure points and potential consequences.

- Resource Exhaustion Testing:

- Objective: Identify how the system handles resource constraints and exhaustion.

- Scenario: Gradually increase the load until system resources (CPU, memory, disk space) are exhausted to observe the impact on performance.

- Breakpoint Testing:

- Objective: Determine the exact point at which the system breaks or fails.

- Scenario: Incrementally increase the load until the system reaches a breaking point, helping identify its limitations and weaknesses.

- Distributed Stress Testing:

- Objective: Evaluate the system’s performance in a distributed or multi-server environment.

- Scenario: Distribute the load across multiple servers or locations to simulate a geographically dispersed user base and assess overall system behavior.

- Application Component Stress Testing:

- Objective: Focus stress testing on specific components or modules of the application.

- Scenario: Stress test individual components (e.g., APIs, database queries) to identify weaknesses or limitations in specific areas.

- Network Stress Testing:

- Objective: Assess the impact of network conditions on system performance.

- Scenario: Introduce variations in latency, bandwidth, or network congestion to evaluate how the system responds under different network conditions.
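Several of the types above differ mainly in the shape of the load applied over time. As a rough illustration, the sketch below generates the number of simulated users per stage for three of those shapes. The function names and all numbers are illustrative assumptions and do not come from any particular tool.

```python
from typing import List


def ramp_profile(start: int, step: int, stages: int) -> List[int]:
    """Scalability/breakpoint style: add `step` users at every stage."""
    return [start + step * i for i in range(stages)]


def soak_profile(users: int, stages: int) -> List[int]:
    """Soak (endurance) style: hold a constant load for the whole run."""
    return [users] * stages


def spike_profile(base: int, peak: int, spike_start: int,
                  spike_length: int, stages: int) -> List[int]:
    """Spike style: baseline load with one abrupt burst to `peak`."""
    return [
        peak if spike_start <= i < spike_start + spike_length else base
        for i in range(stages)
    ]


if __name__ == "__main__":
    print("ramp :", ramp_profile(start=50, step=50, stages=5))
    print("soak :", soak_profile(users=200, stages=5))
    print("spike:", spike_profile(base=100, peak=1000, spike_start=2,
                                  spike_length=1, stages=5))
```

A profile like this would typically be fed to whichever load tool is in use, with each list entry held for a fixed stage duration.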

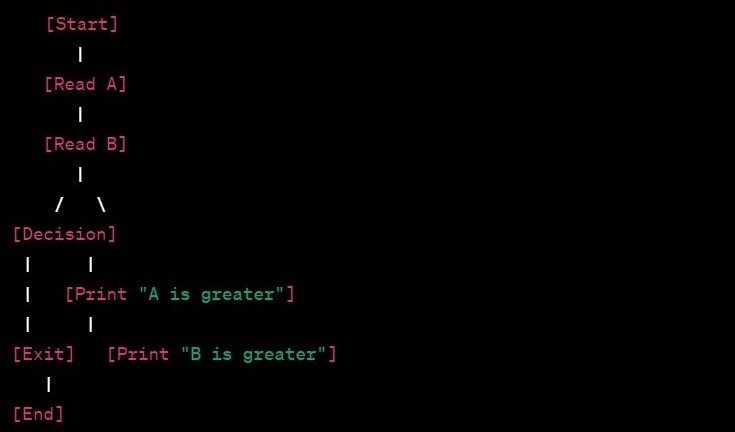

How to do Stress Testing?

Stress testing involves subjecting a software system to extreme conditions to evaluate its robustness, stability, and performance under intense loads.

- Define Objectives and Scenarios:

Clearly define the objectives of the stress testing. Identify the specific scenarios you want to simulate, such as peak loads, sustained usage, or sudden spikes in user activity.

- Identify Critical Transactions:

Determine the critical transactions or operations that are essential for the application’s functionality. Focus on areas that are crucial for the user experience or have a high impact on system performance.

- Select Stress Testing Tools:

Choose appropriate stress testing tools based on your requirements and the technology stack of the application. Popular tools include Apache JMeter, LoadRunner, Gatling, and others.

- Create Realistic Test Scenarios:

Develop realistic test scenarios that mimic the expected usage patterns of real users. Consider factors such as the number of concurrent users, data volume, and transaction rates.

- Configure Test Environment:

Set up a test environment that closely resembles the production environment. Ensure that hardware, software, and network configurations match those of the actual deployment environment.

- Execute Gradual Load Increase:

Begin the stress test with a gradual increase in user load. Monitor the system’s performance metrics, including response times, throughput, and resource utilization, as the load increases.

- Apply Extreme Loads:

Introduce extreme loads to simulate peak conditions, sustained usage, or unexpected spikes in user activity. Stress the system beyond its expected capacity to identify breaking points and weaknesses.

- Monitor System Metrics:

Continuously monitor and collect relevant system metrics during the stress test. Key metrics include CPU usage, memory consumption, network activity, response times, and error rates.

- Analyze Results in Real-Time:

Analyze stress test results in real-time to identify performance bottlenecks, errors, or anomalies. Use the insights gained to make adjustments to the test scenarios or configuration settings.

- Assess Recovery and Error Handling:

Intentionally induce failures or errors during stress testing to assess how well the system recovers. Evaluate error messages, logging, and the overall system behavior under stress-induced errors.

- Perform Soak Testing:

Extend the duration of the stress test to perform soak testing. Observe the system’s stability over an extended period and check for issues related to memory leaks, resource exhaustion, or gradual degradation.

- Document Findings and Recommendations:

Document the findings from the stress test, including any performance issues, bottlenecks, or failure points. Provide recommendations for optimizations or improvements based on the test results.

- Iterate and Optimize:

Iterate the stress testing process, making adjustments to scenarios, configurations, or the application itself based on the identified issues. Optimize the system to enhance its resilience under stress.

- Review and Validate Results:

Review stress test results with stakeholders, development teams, and other relevant parties. Validate the findings and ensure that the necessary improvements are implemented.

- Repeat Regularly:

Conduct stress testing regularly, especially after implementing optimizations or making significant changes to the application. Regular stress testing helps ensure continued robustness and performance.
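The "gradual load increase" and "apply extreme loads" steps above amount to a loop that raises the load stage by stage until a failure criterion is met. The sketch below shows that control loop only. `simulated_error_rate` is a made-up stand-in for running one real test stage with a tool such as JMeter or Locust, and the capacity of 400 users and the 5% threshold are arbitrary assumptions.

```python
from typing import Callable, Optional


def simulated_error_rate(users: int, capacity: int = 400) -> float:
    """Stand-in for a real test stage: no errors up to `capacity` users,
    then the excess share of requests fails. Purely illustrative."""
    if users <= capacity:
        return 0.0
    return (users - capacity) / users


def find_breaking_point(
    run_stage: Callable[[int], float],
    start_users: int,
    step: int,
    max_users: int,
    max_error_rate: float,
) -> Optional[int]:
    """Raise the load stage by stage; return the first user count whose
    error rate exceeds the threshold, or None if it never does."""
    users = start_users
    while users <= max_users:
        error_rate = run_stage(users)
        print(f"{users:>5} users -> error rate {error_rate:.1%}")
        if error_rate > max_error_rate:
            return users
        users += step
    return None


if __name__ == "__main__":
    breaking_point = find_breaking_point(
        simulated_error_rate, start_users=100, step=100,
        max_users=1000, max_error_rate=0.05,
    )
    print("breaking point:", breaking_point)
```

In practice `run_stage` would launch a real load stage and return the measured error rate. A smaller `step` near the suspected limit narrows down the breaking point at the cost of more test runs.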

Tools recommended for Stress Testing:

Apache JMeter:

An open-source Java-based tool for performance testing and stress testing. It supports a variety of applications, protocols, and server types.

- Website: Apache JMeter

LoadRunner:

A performance testing tool from OpenText (formerly Micro Focus) that supports various protocols, including HTTP, HTTPS, Citrix, and more. It is known for its scalability and comprehensive testing capabilities.

- Website: Micro Focus LoadRunner

Gatling:

An open-source, Scala-based tool for load testing. It is designed for ease of use and supports protocols like HTTP, WebSockets, and JMS.

- Website: Gatling

k6:

An open-source, developer-centric performance testing tool that supports scripting in JavaScript. It is designed for simplicity and integrates well with CI/CD pipelines.

- Website: k6

Artillery:

An open-source, modern, and powerful load testing toolkit. It allows users to define test scenarios using YAML or JavaScript and supports HTTP, WebSocket, and other protocols.

- Website: Artillery

Locust:

An open-source, Python-based load testing tool. It emphasizes simplicity and flexibility, allowing users to define user scenarios using Python code.

- Website: Locust

Tsung:

An open-source, Erlang-based distributed load testing tool. It supports various protocols and is designed for scalability and performance testing of large systems.

- Website: Tsung

BlazeMeter:

A cloud-based performance testing platform that leverages Apache JMeter. It provides scalability, collaboration features, and integrations with CI/CD tools.

- Website: BlazeMeter

io:

A cloud-based load testing service that allows users to simulate traffic to their web applications. It provides simplicity and ease of use for quick stress testing.

- Website: io

NeoLoad:

A performance testing platform that supports a wide range of technologies and protocols. It offers features like dynamic infrastructure scaling and collaboration capabilities.

- Website: Neoload

LoadImpact:

A cloud-based load testing service, since rebranded under the k6 name, that allows users to create and run performance tests from various global locations. It offers real-time analytics and supports APIs, websites, and mobile applications.

- Website: LoadImpact

Metrics for Stress Testing:

Metrics for stress testing help assess how well a software system performs under extreme conditions and identify areas for improvement.

- Response Time:

The time taken for the system to respond to a user request. Evaluate how quickly the system can process and respond to requests under stress.

- Throughput:

The number of transactions or requests processed by the system per unit of time. Measure the system’s capacity to handle a high volume of transactions simultaneously.

- Error Rate:

The percentage of requests that result in errors or failures. Identify the point at which the system starts to produce errors and evaluate error-handling capabilities.

- Concurrency:

The number of simultaneous users or connections the system can handle. Assess the system’s ability to support concurrent users and determine the point of concurrency saturation.

- Resource Utilization:

The percentage of CPU, memory, network, and other resources consumed by the system. Identify resource bottlenecks and ensure optimal utilization under stress.

- Transaction Rate:

The number of transactions processed by the system per second. Measure the rate at which the system can handle transactions and identify any performance degradation.

- Latency:

The time delay between sending a request and receiving the corresponding response. Evaluate the system’s responsiveness and identify delays under stress.

- Scalability:

The ability of the system to handle increased load by adding resources. Assess how well the system scales with additional users, transactions, or data.

- Peak Load Capacity:

The maximum load the system can handle before performance degrades significantly. Determine the system’s breaking point and understand its limitations.

- Recovery Time:

The time taken by the system to recover after exposure to a stress-induced failure. Assess how quickly the system can recover and resume normal operation.

- Abort Rate:

The percentage of transactions that are aborted or terminated prematurely. Identify the point at which the system can no longer handle incoming requests and starts to abort transactions.

- Distributed System Metrics:

Metrics specific to distributed systems, such as data consistency, communication latency, and message delivery times. Evaluate the performance and stability of distributed components under stress.

- Content Delivery Metrics:

Metrics related to the delivery of content, including load times for images, scripts, and other resources. Assess the impact of stress on the delivery of multimedia content and user experience.

- Network Metrics:

Metrics related to network performance, including latency, bandwidth usage, and packet loss. Evaluate how well the system performs under different network conditions during stress testing.
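A few of the metrics above (response time, throughput, error rate) can be derived directly from raw request samples. The following sketch assumes each sample records a latency in milliseconds and a success flag. The `Sample` type, the field names, and the nearest-rank percentile method are choices made here for illustration, and real tools may compute percentiles differently.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Sample:
    latency_ms: float  # time from request sent to response received
    ok: bool           # False if the request failed or was aborted


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile; `pct` is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = -(-len(ordered) * pct // 100)  # ceiling division
    return ordered[max(rank, 1) - 1]


def summarize(samples: List[Sample], duration_s: float) -> Dict[str, float]:
    """Aggregate raw samples from one test stage into headline metrics."""
    if not samples:
        raise ValueError("no samples collected")
    latencies = [s.latency_ms for s in samples]
    failures = sum(1 for s in samples if not s.ok)
    return {
        "avg_latency_ms": mean(latencies),
        "p95_latency_ms": percentile(latencies, 95),
        "throughput_rps": len(samples) / duration_s,
        "error_rate": failures / len(samples),
    }


if __name__ == "__main__":
    # 20 hypothetical requests over 4 seconds; the two slowest ones failed.
    samples = [Sample(float(10 * i), ok=i <= 18) for i in range(1, 21)]
    print(summarize(samples, duration_s=4.0))
```

Reporting a high percentile alongside the average is useful under stress because a small share of very slow requests can hide behind an acceptable mean.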

Example of Stress Testing:

Scenario: E-Commerce Website Stress Testing

- Objective:

Assess the performance, stability, and scalability of the e-commerce website under stress. Identify the breaking point and measure the impact on response times, throughput, and error rates.

- Test Environment:

Set up a test environment that mirrors the production environment, including hardware, software, and network configurations.

- Test Scenarios:

Define stress test scenarios that simulate different usage patterns, including peak loads, sustained usage, and sudden spikes in user activity.

- User Activities:

Simulate user activities such as browsing product pages, adding items to the cart, completing purchases, and navigating between pages.

- Transaction Mix:

Define a mix of transactions, including product searches, page views, cart modifications, and order placements, to represent realistic user behavior.

- Gradual Load Increase:

Begin the stress test with a low number of concurrent users and gradually increase the load over time to observe how the system responds.

- Peak Load Testing:

Introduce scenarios that simulate peak loads during specific events, such as promotions or product launches, to assess the application’s performance under extreme conditions.

- Spike Testing:

Simulate sudden spikes in user activity to evaluate how the system handles abrupt increases in traffic.

- Sustained Load Testing:

Apply a sustained load for an extended period to assess the stability of the system over time and identify any issues related to memory leaks or resource exhaustion.

- Monitor Metrics:

Continuously monitor key performance metrics, including response times, throughput, error rates, CPU utilization, memory usage, and network activity.

- Error Scenarios:

Introduce error scenarios, such as intentionally providing incorrect payment information or attempting to process transactions with insufficient stock, to evaluate error-handling capabilities.

- Concurrency Testing:

Increase the number of concurrent users to assess the system’s concurrency limits and identify when response times start to degrade.

- Resource Utilization:

Analyze resource utilization metrics to identify potential bottlenecks and ensure optimal use of CPU, memory, and network resources.

- Recovery Testing:

Intentionally induce failures, such as temporary server outages or database connection issues, to assess how well the system recovers and resumes normal operation.

- Documentation:

Document the stress test results, including any performance issues, breaking points, recovery times, and recommendations for optimization.

Expected Outcomes:

- Identify the maximum number of concurrent users the e-commerce website can handle before performance degrades significantly.

- Determine the impact of stress on response times, throughput, and error rates.

- Assess the system’s ability to recover from stress-induced failures.

- Provide insights and recommendations for optimizing the application’s performance and scalability.
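The transaction mix in the e-commerce scenario can be expressed as weights from which simulated users draw their next action. The sketch below is one possible way to do that with Python's standard `random` module. The transaction names and the 40/35/15/10 weights are hypothetical and should be replaced with figures from real traffic analysis.

```python
import random
from collections import Counter
from typing import Dict, List

# Hypothetical weights: relative share of each transaction type.
TRANSACTION_MIX: Dict[str, int] = {
    "search_products": 40,
    "view_product_page": 35,
    "modify_cart": 15,
    "place_order": 10,
}


def plan_transactions(mix: Dict[str, int], count: int,
                      seed: int = 0) -> List[str]:
    """Draw `count` transactions at random, weighted by `mix`.
    A fixed seed makes the plan repeatable between test runs."""
    rng = random.Random(seed)
    names = list(mix)
    weights = [mix[name] for name in names]
    return rng.choices(names, weights=weights, k=count)


if __name__ == "__main__":
    plan = plan_transactions(TRANSACTION_MIX, count=10_000)
    for name, hits in Counter(plan).most_common():
        print(f"{name:<18} {hits / len(plan):.1%}")
```

Seeding the generator keeps the workload identical across runs, which makes results from before and after an optimization easier to compare.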
